binfalse

Adding a hyperlink to Java Swing GUI

January 3rd, 2011

Just dealt with an annoying topic: how to add a link to a Java Swing frame. It’s not that hard to create some blue labels, but it’s a bit tricky to call a browser that opens a specific website…

As I mentioned, the problem is to call the user’s web browser. Java SE 6 added a new class called Desktop. With it you can interact with the user’s specific desktop. Calling a browser is more than simple, just tell the desktop to browse to a URI:

    java.awt.Desktop.getDesktop ().browse (new java.net.URI ("http://binfalse.de"));

Unfortunately there isn’t support for every OS, so before using it you should check whether it is supported instead of running into runtime errors.

    if (java.awt.Desktop.isDesktopSupported ())
    {
        java.awt.Desktop desktop = java.awt.Desktop.getDesktop ();
        if (desktop.isSupported (java.awt.Desktop.Action.BROWSE))
        {
            try
            {
                // the URI needs a scheme, otherwise it is treated as relative
                desktop.browse (new java.net.URI ("http://binfalse.de"));
            }
            catch (java.io.IOException e)
            {
                e.printStackTrace ();
            }
            catch (java.net.URISyntaxException e)
            {
                e.printStackTrace ();
            }
        }
    }

So far, so good. But what if this technique isn’t supported!? Yeah, that’s crappy ;) You have to check which OS is being used and decide what to do! I searched a little bit through the Internet and developed the following solution:

    String url = ""; // the address to open
    String osName = System.getProperty ("os.name");
    try
    {
        if (osName.startsWith ("Windows"))
        {
            Runtime.getRuntime ().exec ("rundll32 url.dll,FileProtocolHandler " + url);
        }
        else if (osName.startsWith ("Mac OS"))
        {
            // call com.apple.eio.FileManager.openURL (url) via reflection
            Class<?> fileMgr = Class.forName ("com.apple.eio.FileManager");
            java.lang.reflect.Method openURL = fileMgr.getDeclaredMethod ("openURL", String.class);
            openURL.invoke (null, url);
        }
        else
        {
            // check for $BROWSER
            java.util.Map<String, String> env = System.getenv ();
            if (env.get ("BROWSER") != null)
            {
                Runtime.getRuntime ().exec (env.get ("BROWSER") + " " + url);
                return;
            }
            // check for common browsers, take the first one that `which` finds
            String[] browsers = { "firefox", "iceweasel", "chrome", "opera", "konqueror", "epiphany", "mozilla", "netscape" };
            String browser = null;
            for (int count = 0; count < browsers.length && browser == null; count++)
                if (Runtime.getRuntime ().exec (new String[] {"which", browsers[count]}).waitFor () == 0)
                    browser = browsers[count];
            if (browser == null)
                throw new RuntimeException ("couldn't find any browser...");
            Runtime.getRuntime ().exec (new String[] {browser, url});
        }
    }
    catch (Exception e)
    {
        javax.swing.JOptionPane.showMessageDialog (null, "couldn't find a web browser to use...\nPlease browse for yourself:\n" + url);
    }

Combining these solutions, one could create a browse function. Extending the javax.swing.JLabel class, implementing java.awt.event.MouseListener and adding some more features (such as blue text, overloading some functions…), I developed a new class Link, see attachment.

Of course it is also attached, so feel free to use it on your own ;)
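To give an idea of how such a label could be put together, here is a minimal sketch. It is not the attached Link class: the name `LinkLabel`, its constructor and the `open` method are made up for illustration, and it uses a `MouseAdapter` instead of implementing `MouseListener` directly to keep it short. It only covers the `Desktop` path; the OS-specific fallback from above would go where `open` returns false.

```java
import java.awt.Color;
import java.awt.Cursor;
import java.awt.Desktop;
import java.awt.event.MouseAdapter;
import java.awt.event.MouseEvent;
import java.net.URI;
import javax.swing.JLabel;

// Hypothetical sketch of a clickable label, not the attached Link class.
class LinkLabel extends JLabel
{
    private final URI target;

    LinkLabel (String text, String url)
    {
        super (text);
        target = URI.create (url);
        // make it look like a link
        setForeground (Color.BLUE);
        setCursor (Cursor.getPredefinedCursor (Cursor.HAND_CURSOR));
        addMouseListener (new MouseAdapter ()
        {
            @Override
            public void mouseClicked (MouseEvent e)
            {
                open ();
            }
        });
    }

    URI getTarget ()
    {
        return target;
    }

    // returns true if the Desktop API accepted the request
    boolean open ()
    {
        try
        {
            if (Desktop.isDesktopSupported ()
                && Desktop.getDesktop ().isSupported (Desktop.Action.BROWSE))
            {
                Desktop.getDesktop ().browse (target);
                return true;
            }
        }
        catch (Exception e)
        {
            e.printStackTrace ();
        }
        // not supported or failed: the caller may try the OS-specific way
        return false;
    }
}
```

Such a label can be added to a frame like any other JLabel, for example `frame.add (new LinkLabel ("binfalse", "http://binfalse.de"));`.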

Unfortunately I’m one of those guys who don’t have a Mac, shame on me! So I could only test this technique on Windows and Linux. If you are a proud owner of a different OS, please test it and let me know whether it works or not. If you have improvements, please tell me as well.

Revived iso2l

January 2nd, 2011

Someone informed me about a serious bug, so I spent the last few days rebuilding iso2l.

This tool was a project in 2007, at the beginning of my programming experience. Not bad I think, but recently a pharmacist told me that there is a serious bug. It works very well on small compounds, but the result is wrong for bigger molecules. Publishing software known to fail is not that nice and may cause serious problems, so I took some time to look into our old code…

Let me explain the problem with a little example. Let’s assume the atom carbon \(\text{C}\) has two isotopes: \(\text{C}^{12}\) with a mass of 12 and an abundance of 0.9, and \(\text{C}^{13}\) with a mass of 13 and an abundance of 0.1 (these numbers are only for demonstration). Let’s further assume a molecule \(\text{C}_{30}\) consisting of 30 carbon atoms. Since we can choose between two isotopes for each position in this molecule, and each position is independent of the others, there are many, many possibilities to build this molecule from these two isotopes, exactly \(2^{30}=1073741824\). Let’s for example say we use 15 atoms of \(\text{C}^{12}\) and 15 atoms of \(\text{C}^{13}\); there still exist \(\binom{30}{15} = \frac{30!}{15!\cdot 15!} = 155117520\) combinations of these isotopes. Each combination has an abundance of \(0.9^{15} \cdot 0.1^{15} \approx 2.058911 \cdot 10^{-16}\).

Unfortunately, in the first version we built a tree to calculate the isotopic distribution (here it would be a binary tree with a depth of 30). If a branch has an abundance smaller than a threshold, it is cut to decrease the number of calculations and the number of numeric instabilities. If this threshold is \(10^{-10}\), none of these combinations would give us a peak. But if you add them all together (they all have the same mass of \(15 \cdot 12 + 15 \cdot 13 = 375\)), there would be a peak with this mass and an abundance of \(\frac{30!}{15!\cdot 15!} \cdot 0.9^{15} \cdot 0.1^{15} \approx 3.193732 \cdot 10^{-8} > 10^{-10}\), well above the threshold. And it’s not only the threshold that kills peaks: the problem is merely shifted if you decrease the threshold or remove it, because you’ll run into abundances that aren’t representable on your machine, especially for larger compounds (number of carbons > 100). So I had to improve this and dropped the tree approach.
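The numbers of this toy example can be checked with a few lines of Java. This is only a sketch for the two-isotope case and not the code of iso2l; the class and method names are made up. It groups all combinations with the same number of heavy atoms into one peak and does the multiplication in log space, so the tiny per-combination abundances never have to be represented on their own.

```java
import java.math.BigInteger;

// Hypothetical sketch for an element with two isotopes, not the iso2l code.
class IsotopeSketch
{
    // number of ways to place k heavy atoms on n positions
    static BigInteger binomial (int n, int k)
    {
        BigInteger result = BigInteger.ONE;
        for (int i = 1; i <= k; i++)
            result = result.multiply (BigInteger.valueOf (n - k + i)).divide (BigInteger.valueOf (i));
        return result;
    }

    // abundance of the peak with k heavy atoms out of n,
    // p is the abundance of the heavy isotope (assumes 0 < p < 1)
    static double peakAbundance (int n, int k, double p)
    {
        // log of the binomial coefficient, summed factor by factor
        double logCombinations = 0;
        for (int i = 1; i <= k; i++)
            logCombinations += Math.log (n - k + i) - Math.log (i);
        return Math.exp (logCombinations + k * Math.log (p) + (n - k) * Math.log (1 - p));
    }

    // mass of that peak for the given isotope masses
    static int peakMass (int n, int k, int lightMass, int heavyMass)
    {
        return (n - k) * lightMass + k * heavyMass;
    }
}
```

With the demo numbers from above, `IsotopeSketch.binomial (30, 15)` gives the 155117520 combinations, and `IsotopeSketch.peakAbundance (30, 15, 0.1)` gives the summed abundance of the peak at mass `IsotopeSketch.peakMass (30, 15, 12, 13)`.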

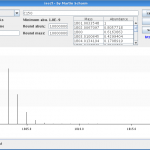

And while I was touching the code, I translated the tool to English, increased the isotopic accuracy and added some more features. In figure 1 you can see the output of version 1 for \(\text{C}_{150}\). As I said, this version loses many peaks. In contrast, you can see the output of version 2 for the same compound in figure 2. Here the real isotopic cluster is visible.

Please try it out and tell me if you find further bugs or space for improvements or extensions!

FM-Contest: Mappings

December 22nd, 2010

As you know, I took part in the programming contest organized by freiesMagazin. I already presented the tactics of my bot; here is how I parse the maps.

The maps are very simple, there are just two types of fields: wall and floor. Walls aren’t accessible and block the view. Bots can only move through floor-fields, but these fields are toxic, so they’ll decrease the health of a bot. This toxicity should be kept in mind while programming an AI, but doesn’t play any role in this article. Here is an example of how a map is presented to the bot (sample map provided by the organizers):

######################################################################

# # # #

# # #

# # ################### # ####

# # # # # #

# # # # # #

####### ####### # # # #

# # ################# ###### #### #

# # # # # ########### #

####### #### # # # # #

# # # # # ####################### #

# # # ################# ## #

# # #

# # #

## ############## ################ ############# ###### ###

# # # # # #

# # # # # #

# #### #### # # # # #

# # # # # # # #

# # # # ###### #

# # # # # # ###### ###

# # # # # # #

# ######### #################################### #############

## # # # # # #

# # # # #

# ######## # # # #

# # #

# # # # #

# # # # # #

######################################################################

The number sign (#) identifies walls, spaces are floor fields. The task is to get the bot to understand the map’s topology. It’s a big win if you can split the map into areas or rooms, to know whether you’re in the same room as an opponent and what’s the best way to change rooms. I’ll explain my approach with a very simple example:

    #######################
    #                     #
    #                     #
    #                     #
    #                     #
    #                     #
    #            ##########
    #            #        #
    #                     #
    #            #        #
    #            #        #
    #######################

You’ll immediately see there are two rooms, a major one and a small lumber room in the bottom right corner of the map. But how should the bot see it!?

My first attempt was to build rooms based on horizontal and vertical histograms of wall-fields. Something like image processing. This attempt was quickly rejected: inefficient and not really working well.

The second idea was to sample way-points on the map and build rooms based on visibility between these points. I’ve read that autonomous vacuum cleaners work based on this technique ;-)

One night later I found a much better solution, it’s based on divisive clustering. Starting with the whole map repeat the following steps recursively:

- Is this part of the map intersected by a wall-field?

  - YES: If this part is larger than a threshold, split it into four parts of ideally equal size and repeat this algorithm with each part, otherwise stop

  - NO: We found a room! Label all its fields and try to connect it to the left, right, bottom and top if there are no walls intersecting

When this is done, we’ve found the main parts of each room. Afterwards I try to expand each room to neighboring fields that are unlabeled and don’t have neighbors with different labels. Fields that are connected to more than one room are doors.
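The recursive splitting step can be sketched in a few lines of Java. This is not the code of my bot, just an illustration under some assumptions: the map is given as an array of equally long strings, the class name, method names and the `MIN_SIZE` threshold are made up, and merging neighboring parts as well as expanding rooms and finding doors are left out.

```java
// Hypothetical sketch of the splitting step only, not the bot's code.
class RoomSketch
{
    // assumed threshold: parts narrower or lower than this are not split further
    static final int MIN_SIZE = 2;

    // returns labels[y][x]: 0 means unlabeled, values > 0 are part ids
    static int[][] label (String[] map)
    {
        int[][] labels = new int[map.length][map[0].length ()];
        int[] nextId = {1};
        split (map, labels, 0, 0, map[0].length (), map.length, nextId);
        return labels;
    }

    static void split (String[] map, int[][] labels, int x, int y, int w, int h, int[] nextId)
    {
        if (w <= 0 || h <= 0)
            return;
        if (!containsWall (map, x, y, w, h))
        {
            // no wall inside: this rectangle is (part of) a room
            int id = nextId[0]++;
            for (int j = y; j < y + h; j++)
                for (int i = x; i < x + w; i++)
                    labels[j][i] = id;
            return;
        }
        if (w < MIN_SIZE || h < MIN_SIZE)
            return; // too small, rejected by the threshold
        // split into four parts: top left, top right, bottom left, bottom right
        int hw = w / 2, hh = h / 2;
        split (map, labels, x, y, hw, hh, nextId);
        split (map, labels, x + hw, y, w - hw, hh, nextId);
        split (map, labels, x, y + hh, hw, h - hh, nextId);
        split (map, labels, x + hw, y + hh, w - hw, h - hh, nextId);
    }

    static boolean containsWall (String[] map, int x, int y, int w, int h)
    {
        for (int j = y; j < y + h; j++)
            for (int i = x; i < x + w; i++)
                if (map[j].charAt (i) == '#')
                    return true;
        return false;
    }
}
```

After this step every labeled rectangle is free of walls, but one room usually consists of several rectangles with different ids, which is why the merging and expanding described above is still needed.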

To explain the idea of the algorithm I’ll present the procedure on my small example. At the beginning there are of course intersecting wall-fields, so it is split into four parts. Three of them aren’t intersected anymore, so they are labeled:

    #######################
    #111111111222222222222#
    #111111111222222222222#
    #111111111222222222222#
    #111111111222222222222#
    #111111111222222222222#
    #333333333   ##########
    #333333333   #        #
    #333333333            #
    #333333333   #        #
    #333333333   #        #
    #######################

Since the rooms with labels 1, 2 and 3 are connected and not intersected by a wall-field, they are merged together:

    #######################
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333   ##########
    #333333333   #        #
    #333333333            #
    #333333333   #        #
    #333333333   #        #
    #######################

But the fourth part contains wall fields, so it is split into four more parts. Three of them are still intersected, but one gets labeled:

    #######################
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333   ##########
    #333333333   #        #
    #333333333       44444#
    #333333333   #   44444#
    #333333333   #   44444#
    #######################

Splitting the remaining parts gives rooms that are too small, so they are rejected by the size threshold. So we try to expand the found rooms and end up in the following situation:

    #######################
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333333333333#
    #333333333333##########
    #333333333333#44444444#
    #333333333333D44444444#
    #333333333333#44444444#
    #333333333333#44444444#
    #######################

Here D represents a door. Doors are saved separately to remember how rooms are connected and to know the possibilities of fleeing out of a room if a predator comes in to catch you…

All this parsing is done in a separate thread, so the bot is able to do its work even if the maps are large and take a long time to parse… Of course there is a lot of room for improvements, but for this contest it is enough I think.

Unfortunately I can’t recommend further reading, I don’t know any previous work like this.

Yeah, Top Ten!

December 21st, 2010

As I announced in my previous article, I took part in a contest. And, what should I say, I’m one of the six best programmers - worldwide!

Ok, ok - the contest has just started, there aren’t any results yet, but they already announced that only six bots are facing off. It’s a pity that there aren’t more people taking part in this game, but anyway, the fewer opponents, the easier it is to conquer a place on the podium :-P

Good luck to everyone participating!

FM-Contest: Tactics

December 21st, 2010

As I announced in my last articles, I’ve submitted a bot for the contest organized by freiesMagazin. Here I present my bot’s tactics.

The submitted bots are grouped into teams. On the one hand there is the BLUE team, the preys, who have to run and hide. On the other hand there is the RED team, the predators, whose task is to catch bots of the BLUE team. If a member of the BLUE team gets captured by a RED team member, it changes teams from BLUE to RED. If there was no team change for a longer time, one of the BLUE team is forced to change. These are the simplified rules, for more details visit their website. All in all: if you are BLUE, run and hide, otherwise hunt the BLUEs.

The map is organized very simply, there are wall-fields and floor-fields. You can neither enter wall-fields nor see through them. Floor-fields are accessible, but toxic, so they decrease your health status. You can look in each of eight directions: NORTH, NORTH_WEST, WEST, SOUTH_WEST, SOUTH, SOUTH_EAST, EAST, NORTH_EAST. You’ll only see enemies that are standing in the direction you are looking in (180°) and aren’t hidden behind a wall. Positions of your team members are told by communicators, so you’ll know where your team is hanging around. In each round you’re able to walk exactly one field in one of the eight directions, and you can choose where to look for the next turn.

Those are the basics. How does my bot handle each situation? In general, with every turn my bot looks in the opposite direction, so that it sees all visible enemies (those that are not behind a wall) at least every second round.

Suppose it is a BLUE team member: my bot first checks whether it has seen a RED one within the last rounds. If there was an enemy, the bot of course has to run! For that, my bot holds a so-called distance map for every opponent. This map tells my bot how many turns each opponent needs to reach any given point on the map. These distance maps are created via an adaptation of Dijkstra’s algorithm [Dij59]. So in principle the bot could search for a position on the map that it reaches before any of the enemies can reach it. But there is a problem. Consider a room you’re hiding in that has two doors, and an enemy entering this room through one of these doors. Finding the best field with respect to these distance maps too often means running in the direction opposite to the enemy’s door. Hence, you’ll run into a dead end and blunder. So it might be more promising to first check for each door whether you are faster than your enemy, and decide afterwards where to go. Unfortunately there are no doors defined on the map, but I’ll explain in a following article how to parse the map.
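A distance map of this kind can be sketched as follows. This is not the code of my bot: since every step costs exactly one turn, the sketch uses a plain breadth-first search, which yields the same distances as Dijkstra’s algorithm for uniform costs. The class and method names are made up, the map is assumed to be an array of equally long strings, and diagonal steps past wall corners are assumed to be allowed.

```java
import java.util.ArrayDeque;
import java.util.Arrays;

// Hypothetical sketch of a distance map, not the bot's code.
class DistanceMapSketch
{
    // returns dist[y][x]: number of turns needed from the start field,
    // -1 for walls and unreachable fields
    static int[][] distances (String[] map, int startX, int startY)
    {
        int h = map.length, w = map[0].length ();
        int[][] dist = new int[h][w];
        for (int[] row : dist)
            Arrays.fill (row, -1);
        ArrayDeque<int[]> queue = new ArrayDeque<> ();
        dist[startY][startX] = 0;
        queue.add (new int[] {startX, startY});
        while (!queue.isEmpty ())
        {
            int[] cur = queue.poll ();
            // visit all eight neighbors
            for (int dy = -1; dy <= 1; dy++)
                for (int dx = -1; dx <= 1; dx++)
                {
                    int nx = cur[0] + dx, ny = cur[1] + dy;
                    if (nx < 0 || ny < 0 || nx >= w || ny >= h)
                        continue;
                    if (map[ny].charAt (nx) == '#' || dist[ny][nx] != -1)
                        continue; // wall or already visited
                    dist[ny][nx] = dist[cur[1]][cur[0]] + 1;
                    queue.add (new int[] {nx, ny});
                }
        }
        return dist;
    }

    // a field is safe if we reach it strictly before the enemy does
    static boolean reachedFirst (int[][] own, int[][] enemy, int x, int y)
    {
        return own[y][x] >= 0 && (enemy[y][x] < 0 || own[y][x] < enemy[y][x]);
    }
}
```

Computing one such map for the own position and one per opponent allows comparing the values field by field, or door by door once the doors are known.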

If my bot is BLUE and no RED bot is in sight, it draws one random room from a multinomial distribution over the rooms. Each room gets a score resulting from its position. The score is bigger the shorter the distance to the room and the larger the distance from this room to the center of the map (try to remain on the sidelines). These scores are normalized, so you can understand them as a multinomial distribution. So the bot is searching for a room that is near and in a corner of the map. Of course at random, because enemies might search for it in optimal rooms ;-) Once that room is reached, the bot will hang around there (as far away as possible from doors and at positions with minimal toxicity) until a teammate has changed teams (this bot still knows where we are), but it changes rooms at least every 20 rounds to raise the entropy in the motion profile. During these room changes the bot is very vulnerable to loitering enemies, but nevertheless it might be an easier victim if it stays in one and the same room all the time…

On the other side of life, when the bot is RED, there is no time for chilling out in a room far away from all the action. It should be in the middle of it, not only nearby. If it sees a BLUE bot, it of course follows and tries to catch it. Here would be room to predict escape routes of our victims and cut them off. But due to a lack of time this bot simply follows its victims. Nobody visible? Go hunting! The bot tries to find the prey that was seen last, at the position where we’ve seen it. That’s the next place for improvements. I’m tracking motion profiles of every opponent; one could use this information to predict a better position for the opponents (for example using neural networks or something like that)… If no enemy can be found, my bot canvasses all rooms of the map, trying to find anyone to catch. During this exploration it always prefers long ways through the map. The more rooms it visits, the higher the probability of finding someone.

Each turn is limited to a maximum of five seconds to decide what to do. In my first attempt I tried to process these tactics with threads. The idea was to keep the main tool in a loop, testing whether the 5 seconds are over or the AI has finished searching for the next turn. So the AI could decide between different actions within this time, based on scores, and the algorithm would be something like an anytime algorithm. That means whenever you stop it, there is a decision for the next step, and the later you stop it, the better the decision. Due to the simplicity of my AI I decided against this procedure. So the possible actions are processed without threads. But if you take a look at the code, you’ll find a lot of remnants remaining, I didn’t have the leisure to clean up…
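Drawing a room from normalized scores could look like the following sketch. It is not the code of my bot: the class and method names are made up, and the concrete `score` formula is only one possible choice that grows with the distance to the center and shrinks with the distance to the room.

```java
import java.util.Random;

// Hypothetical sketch of the random room choice, not the bot's code.
class RoomChoiceSketch
{
    // assumed score: near rooms that are far from the map center score high
    static double score (double distanceToRoom, double roomDistanceToCenter)
    {
        return roomDistanceToCenter / (1.0 + distanceToRoom);
    }

    // scale the scores so that they sum up to 1
    static double[] normalize (double[] scores)
    {
        double sum = 0;
        for (double s : scores)
            sum += s;
        double[] p = new double[scores.length];
        for (int i = 0; i < p.length; i++)
            p[i] = scores[i] / sum;
        return p;
    }

    // returns index i with probability p[i], u is uniform in [0, 1)
    static int sample (double[] p, double u)
    {
        double cumulative = 0;
        for (int i = 0; i < p.length; i++)
        {
            cumulative += p[i];
            if (u < cumulative)
                return i;
        }
        return p.length - 1; // guard against rounding errors
    }

    static int sample (double[] p, Random rng)
    {
        return sample (p, rng.nextDouble ());
    }
}
```

A room with three times the score of another one is then chosen three times as often, but the choice stays unpredictable for the enemies.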

References

- [Dij59] Edsger W. Dijkstra. A note on two problems in connexion with graphs. Numerische Mathematik, 1(1):269–271, December 1959. http://www-m3.ma.tum.de/twiki/pub/MN0506/WebHome/dijkstra.pdf